Bruce Ciummo, Education Effectiveness Specialist wrote on LinkedIn:

This will continue to happen until we humanize AI within education by intentionally reinforcing power skills in the curriculum.

I replied:

Bruce Ciummo Interesting. Can you expand on what you mean by “humanize AI”?

Bruce Ciummo, Education Effectiveness Specialist replied:

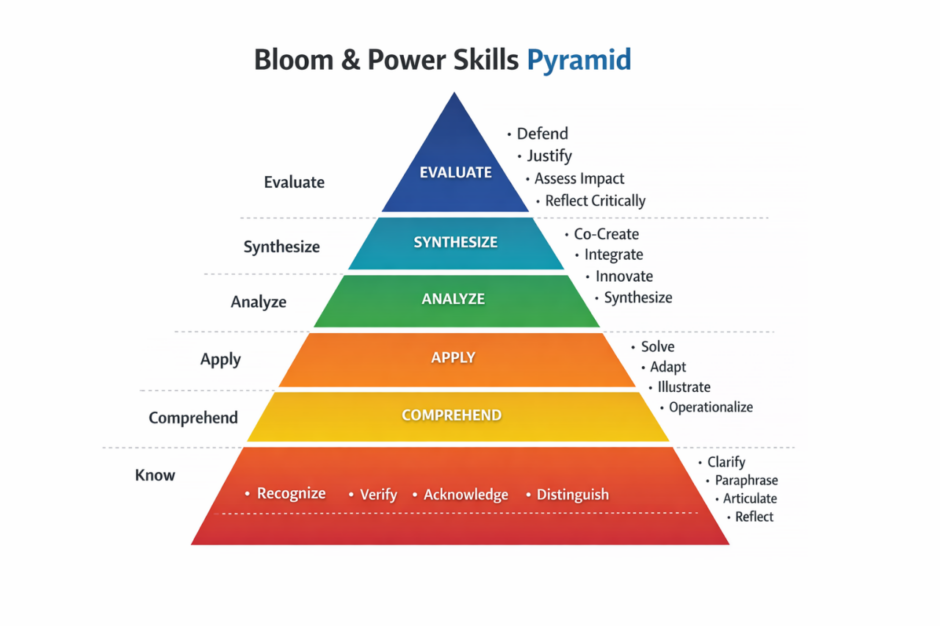

We need to start overlaying power skills verbs with Bloom’s.

I replied:

I am confused. Humanize AI = Robots in a humanoid form factor?

If I am understanding your cryptic responses, you are saying that to “humanize AI” means it needs to have the capabilities on the Bloom & Power Skills Pyramid?

How does simulating these verbs humanize AI?

It’s similar to anthropomorphizing. Dogs are not human, yet we can project human qualities upon them. There is overlap in some areas, but that doesn’t make dogs (or other animals) human.

Update: Apparently AI anthropomorphism is already a thing: https://en.wikipedia.org/wiki/AI_anthropomorphism

Bruce Ciummo, Education Effectiveness Specialist replied:

I see your point, but my view is pretty simple.

AI is increasingly handling many of the traditional Bloom’s activities like summarizing, analyzing, and evaluating. Because of that, education needs to intentionally embed power skills into SLOs and assignments.

Skills like defending ideas, solving problems, co-creating, and reflecting critically are the human capabilities that become even more important in an AI-driven world. The goal isn’t to replace Bloom’s—it’s to reinforce it with the skills AI can’t easily replicate.

I replied:

Thank you. That makes more sense now.

But why use AI in the educational journey? The intensity and depth of effort someone puts into learning something are beneficial for learning and reinforcement. AI, in most cases, is a crutch and shortcut that will only remove the struggle, which is essential to education and learning.

Without work, your muscles get soft. Including your brain.

Bruce Ciummo, Education Effectiveness Specialist wrote:

You wouldn’t do bicep curls to strengthen your quads. Different outcomes require different muscles. AI is the same way—it shifts which “muscles” matter.

For years, education has optimized for memorization, test scores, and GPA. But the skills AI cannot easily replicate—critical thinking, leadership, communication, adaptability, and judgment—are the ones that now matter more.

Many schools say they teach these power skills, but often it’s at a surface level. High school incentives revolve around ACT/SAT scores and GPA. In college, power skills may show up in co-curricular activities, but they are rarely embedded deeply into coursework and assessment.

That’s the real issue: we’re out of alignment with what employers and entrepreneurs actually need.

AI isn’t the problem. It’s exposing where the skill gaps already existed.

I replied:

I don’t think your analogy on working out applies to humans using AI. A more applicable one would be: You wouldn’t have someone else do squats to work your quads.

The optimization you are referring to is schooling, not education. Those are two distinct pursuits. As Grant Allen said, in a line usually misattributed to Twain and quoted in different variations: “Never let schooling interfere with education.”

Just because the modern school (and the systems that regulate it) focuses on memorization, test scores, and GPA, doesn’t mean the students are being educated. In fact, that has often been the critique of both public and private schools.

“…critical thinking, leadership, communication, adaptability, and judgment” have always been the goal of caring, thoughtful, and educated parents. And it’s these same parents who are ultimately responsible for educating their children.

Again, this is a question of outsourcing: parents have outsourced the education (and more) of their children to schools.

AI didn’t expose anything we didn’t already know regarding the failures of modern schooling. And AI won’t save parents and students either.

You can’t build your brain if you outsource all of your thinking.